Astronomy Magazine – Your source for the latest news on astronomy, observing events, space missions, and more.

Picture of the Day

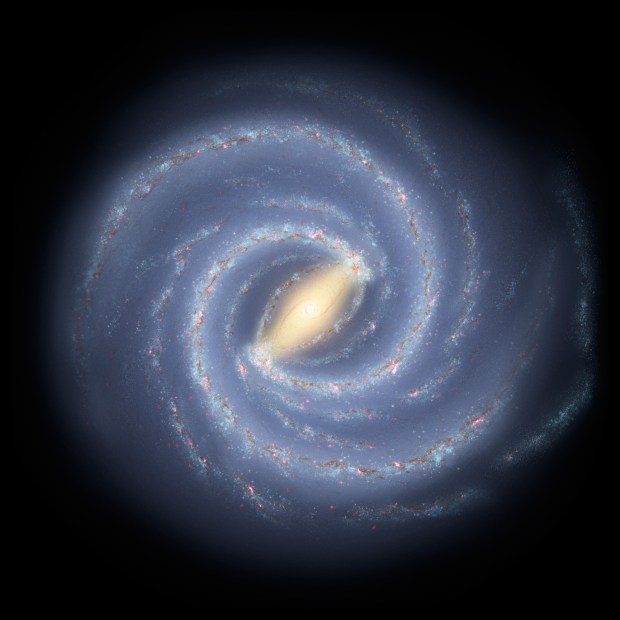

The colors of the Cave

Steve Leonard, taken from Markham, Ontario

The Cave Nebula (Sharpless 2-155) is a complex that combines emission, reflection, and dark nebulae. This image was taken through Hα, OIII, and SII filters with a 4.5-inch refractor, using exposures of 10, 13, and 6 hours, respectively. It was processed by blending a static Hubble-palette rendition with a dynamic Foraxx-palette combination of the channels.