Imaging at this rate will generate about 15 terabytes (15 trillion bytes) of raw data per night and 30 petabytes over its 10-year survey life. (A petabyte is approximately the amount of data in 200,000 movie-length DVDs.) Even after processing, that’s still a 15 PB (15,000 TB) store.
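The DVD comparison can be checked with a few lines of arithmetic. This is only a rough sketch: it assumes a single-layer DVD holding 4.7 GB and decimal (SI) units, neither of which is stated in the article, and the names are illustrative. Under those assumptions a petabyte works out to roughly 213,000 discs, consistent with the "approximately 200,000" quoted above.

```python
# Assumption: single-layer DVD capacity of 4.7 GB, decimal (SI) units throughout.
DVD_BYTES = 4.7e9
TB = 1e12
PB = 1e15


def dvds(n_bytes: float) -> float:
    """Return how many DVDs of DVD_BYTES capacity are needed to hold n_bytes."""
    return n_bytes / DVD_BYTES


per_petabyte = dvds(1 * PB)   # the article's "200,000 DVDs" comparison
per_night = dvds(15 * TB)     # one night of raw data
per_survey = dvds(30 * PB)    # raw data over the 10-year survey

print(f"DVDs per petabyte: {per_petabyte:,.0f}")
print(f"DVDs per night:    {per_night:,.0f}")
print(f"DVDs per survey:   {per_survey:,.0f}")
```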

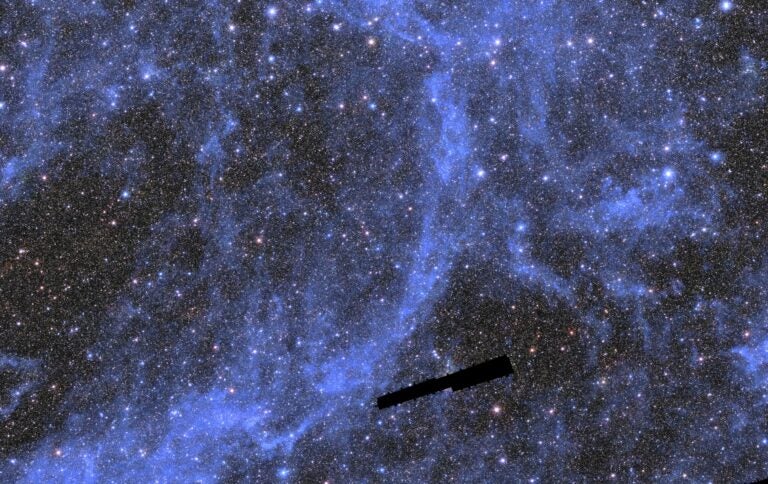

Such huge datasets will give astronomers a ten-year time-lapse “movie” of the southern sky, yielding new subject matter for time-domain studies and a deeper understanding of the dynamic behavior of the universe. They will also change the way science is done – astronomer-and-telescope is giving way to astronomer-and-data as an engine of new knowledge.

Preparing the information

The LSST’s biggest strength may be its ability to capture transients – rare or changing events usually missed in narrow-field searches and static images. The good news is that software will alert astronomers almost immediately when a transient is detected to enable fast follow-up observations by other instruments. The not-so-good news is that up to 10 million such events are possible each night. With detection rates like these, good data handling is essential.

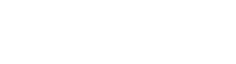

Nightly processing will subtract two exposures of each image field to quickly highlight changes. The data stream from the camera will be pipeline processed and continuously updated in real time, with a transient alert triggered within 60 seconds of completing an image readout.
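The idea of subtracting two exposures to highlight changes can be illustrated with a toy example. The sketch below is not the LSST pipeline: it assumes the two exposures are already aligned and share the same background and noise level, it uses simulated data, and the function name and the 5-sigma threshold are illustrative choices. A real system would also have to register the images and match their point-spread functions before subtracting.

```python
import numpy as np


def find_changes(reference, new, n_sigma=5.0):
    """Subtract two aligned exposures and flag pixels that changed significantly.

    Hypothetical helper for illustration; returns the difference image and a
    boolean mask of pixels deviating by more than n_sigma noise levels.
    """
    diff = new.astype(float) - reference.astype(float)
    # Robust noise estimate: scaled median absolute deviation of the difference.
    mad = np.median(np.abs(diff - np.median(diff)))
    sigma = 1.4826 * mad
    mask = np.abs(diff) > n_sigma * sigma
    return diff, mask


# Simulated data: a flat sky of 100 counts with Gaussian noise in each exposure.
rng = np.random.default_rng(0)
reference = 100.0 + rng.normal(0.0, 2.0, size=(64, 64))
new = 100.0 + rng.normal(0.0, 2.0, size=(64, 64))
new[20, 30] += 50.0  # inject a bright "transient" into the second exposure

diff, mask = find_changes(reference, new)
print("Flagged pixels (row, col):", np.argwhere(mask).tolist())
```

Because the static sky cancels in the subtraction, only the injected source should stand well above the noise in the difference image.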

Data compiled into scheduled science releases will get considerable reprocessing to ensure that all contents are consistent, that false detections are filtered and that faint signal sources are confirmed. Reprocessing will also classify objects using both standard categories (position, movement, brightness, etc.) and dimensions derived mathematically from the data themselves. Products will be reprocessed at time intervals from nightly to annually, which means that their quality will improve as additional observations are accumulated.

Preparing the science

The LSST program includes Science Collaborations, teams of scientists and technical experts that work to grow the observatory’s science agendas. There are currently eight collaborations in such areas as galaxies, dark energy and active galactic nuclei. One of the most distinctive, however, is the Informatics and Statistics Science Collaboration (ISSC), which, unlike other teams, doesn’t focus on a specific astronomy topic but cuts across them all. New methods will be needed to handle heavy computational loads, to optimize data representations, and to guide astronomers through the discovery process. The ISSC focus is on such new approaches to ensure that astronomers realize the best return from the anticipated flood of new data.

“Data analysis is changing because of the volume of data we’re facing,” says Kirk Borne, an astrophysicist and data scientist with Booz Allen Hamilton, and a core member of the ISSC. “Traditional data analysis is more about fitting a physical model to observed data. When I was growing up, we didn’t have sample sizes like this. We were trying to understand a particular phenomenon with our small sample sets. Now, it’s more unsupervised. Instead of asking ‘tell me about my model,’ you ask ‘tell me what you know.’ Data become the model, which means that more is different.”

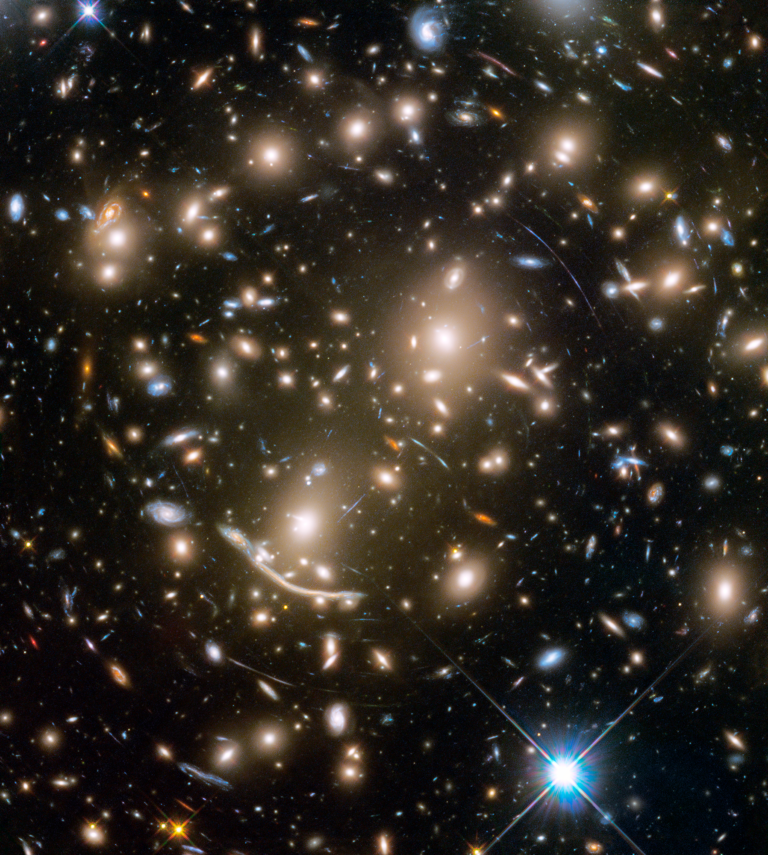

LSST data will almost certainly expand the chances for surprise. “When we start adding different measurement domains like gravitational wave physics and neutrino astrophysics for exploration,” adds Borne, “we start seeing these interesting new associations. Ultraluminous infrared galaxies are connected with colliding starbursting galaxies, for example, but it was a discovery made by combining optical radiation with infrared. Quasars were discovered when people compared bright radio observations of galaxies with optical images of galaxies.”

The LSST Data Management Team is starting to orient the astronomy community to what’s coming with a series of conferences and workshops. “We try to cover as many meetings as we can, giving talks and hosting hack sessions,” says William O’Mullane, the team Project Manager.

Science notebooks, which allow users to collaborate, analyze data and publish their results online, will be an integral tool for LSST research communities and one that’s being introduced early. “We rolled out JupyterLab [an upgraded type of science notebook] at a recent workshop,” he adds, “which is a much faster way to get people working with the stack [the image manipulation code set].”

The next generation of big data astronomers is also being groomed through graduate curricula and a special fellowship program. “Getting students involved early is a very good thing, both for the field and for them,” says Mario Juric, Associate Professor of Astronomy at the University of Washington, and the LSST Data Management System Science Team Coordinator. “Students need to understand early on what it’s like to do large-scale experiments, to design equipment and software, and to collaborate with very large teams. Astronomy today is entering the age of big data just like particle physics did 20 or 30 years ago.

“We also have a Data Science Fellowship Program,” adds Juric, “a cooperative effort a few of us initiated in 2015 to educate the next generation of astronomer data scientists through a two-year series of workshops.” The program is funded by the LSST Corporation, a non-profit organization dedicated to enabling science with the telescope, and student interest has been intense. Only about a dozen people were admitted from among 200 applicants in a recent selection cycle.

The future IS the data

The LSST will cement an age where software is as critical to astronomy as the telescope. “When I was in graduate school,” says Juric, “I worked on the Sloan Digital Sky Survey (SDSS) and I didn’t touch a telescope; I did all my research out of a database. I know many students who have done the same. So we’re already seeing that kind of migration.”

O’Mullane would agree. “Large surveys like SDSS, Gaia and now LSST provide enough data for a different approach,” he says. “Astronomers are not always reaching for a telescope. In fact, missions like LSST basically only offer you the archive; you can’t even request the observatory to make a specific observation.”

And, because more people will have ready access to those data, the biggest discoveries may come not only from the professionals, but from dedicated amateurs working at home on their laptops.