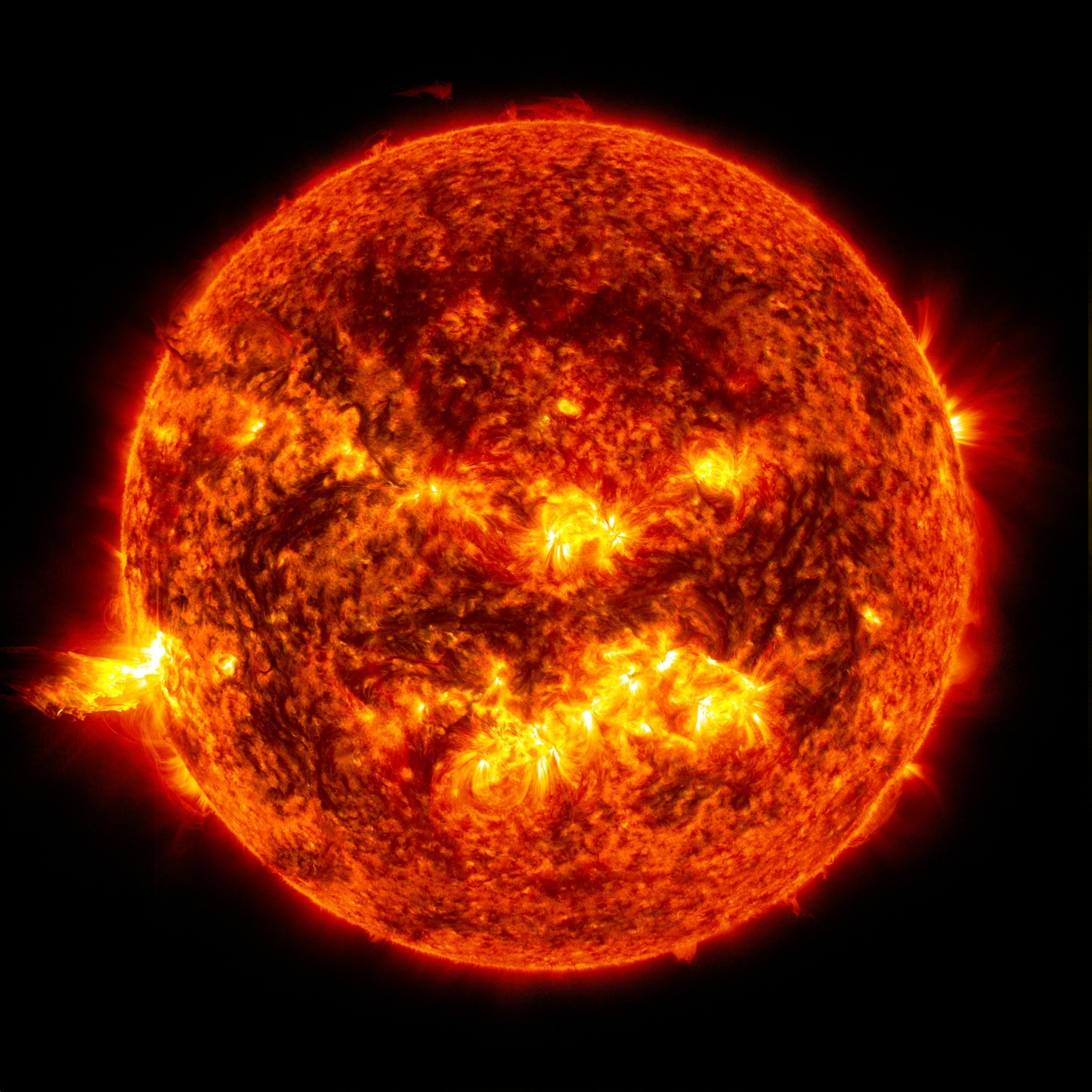

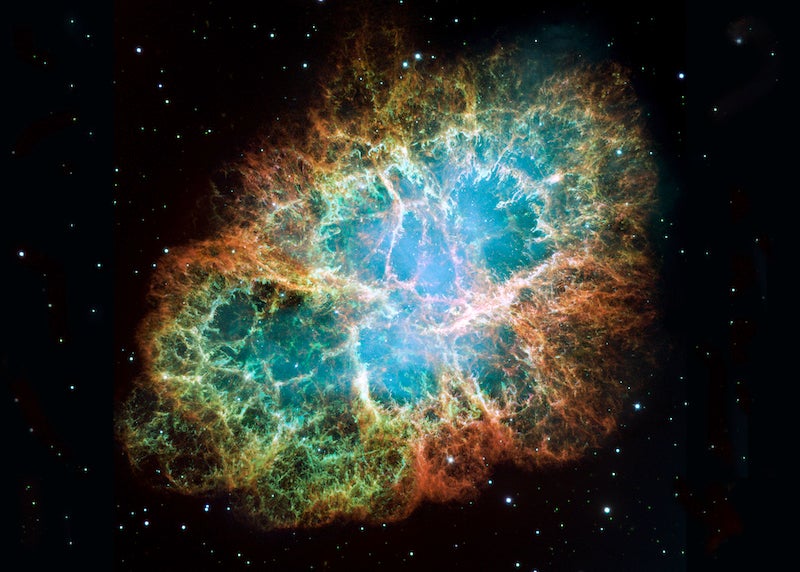

The universe’s first stars formed from the collapse of molecular clouds holding little more than hydrogen, helium, and lithium, the main elements created in the Big Bang. When these stars died, the elements created within them through stellar nucleosynthesis (the nuclear reactions that keep stars balanced against gravitational collapse) were jettisoned into space, where they enriched interstellar molecular clouds. Later stars formed from these enriched clouds and so incorporated larger quantities of heavier elements, which increased their metallicity.

Since planets form at the same time as their parent stars (demonstrated in the animation below), they reflect the metallicity of the nebulae that forged them. Like stars, planets therefore have compositions that depend on where and when they formed within our Milky Way Galaxy. Old stars, formed from ancient material very poor in metals, ought to breed relatively low-mass terrestrial planets. Conversely, more recent generations of stars, formed from relatively metal-rich nebulae, should harbor comparatively high-mass earthlike planets. The optimal range in metallicity for stars to spawn earthlike worlds, according to astronomers who subscribe to the concept of the Galactic Habitable Zone, is between 20 percent and 200 percent of the sun’s metallicity. The region in our galaxy most likely to hold such stars, the Galactic Habitable Zone, extends 10,000 or so light-years on either side of the sun’s orbit about the galactic center.