Millions of Americans were glued to their televisions on the morning of January 28, 1986, to watch the Challenger space shuttle launch from the Kennedy Space Center on Merritt Island, Florida.

Networks like CNN alternated between audio from the excited correspondent covering the event and the calm voice of Steve Nesbitt, NASA’s public affairs officer at the Johnson Space Center in Houston.

Viewers heard the correspondent count down and saw the three main engines ignite. “We have liftoff!” the correspondent confirmed as the shuttle soared into the sky.

A bit of static brought in Nesbitt's voice as he noted that Challenger had rolled properly, a maneuver used to reduce stress on the wings. Viewers listened to the roar of the engines while Nesbitt reported that they were operating as expected.

Challenger arced across the sky, and the blazing exhaust of its engines filled the screen. Nesbitt continued to update its position. Suddenly, the picture blurred and the screen filled with white smoke and flashing flames.

After a pause, the correspondent suggested the rocket boosters used during the launch had blown away from the spacecraft. “Flight controller is looking very closely at the situation,” Nesbitt stated calmly. “Obviously a major malfunction.”

The camera panned down as streams of smoke plummeted toward the ocean. The voices went silent. Then Nesbitt confirmed the malfunction. The country later learned that all seven astronauts aboard had been killed.

Understanding what went wrong

The government quickly launched an investigation into why Challenger exploded. During liftoff, two rocket boosters were meant to provide the shuttle with the necessary thrust. The joints in these boosters were sealed by rubber O-Rings, which were designed to close the gap and keep hot gases from leaking out.

The morning of the launch was unusually cold for Florida: just 36 degrees Fahrenheit at liftoff. The night before, engineers warned that temperatures that low might stiffen the O-Rings and weaken their seal.

The launch was delayed for two hours to allow ice to melt off the launch pad, but it wasn’t enough to prevent disaster. The O-Rings failed, hot gases escaped the rocket booster and an explosion ensued.

Government inquiries confirmed that the decision to move ahead with the questionable O-Rings technically followed NASA protocol. There was no organizational misconduct. No rules were broken. No one lied or falsified records. That left a harder question: how could such a catastrophe occur in the absence of any clear wrongdoing?

Isolating the cause

Social scientists initially pointed to groupthink to explain why NASA scientists believed the Challenger's O-Rings were safe to fly in cold weather. Yale University social psychologist Irving Janis introduced this theory in the 1970s. He wrote that cohesive groups develop a mode of thinking aimed at maintaining agreement and limiting friction, which leads members to put harmony first whenever they face a problem or challenge.

Janis described eight symptoms of groupthink, including overconfidence, collective rationalization, self-censorship, and the tendency to stereotype perceived outsiders.

Social scientists applied Janis' eight symptoms to the Challenger launch and found significant similarities. For example, the engineers who warned about the O-Rings' cold-weather weakness worked for an outside firm and were stereotyped as outsiders. NASA employees were also overconfident, since they had never experienced an in-flight death.

The groupthink theory dominated until 1996, when sociologist Diane Vaughan published The Challenger Launch Decision and introduced the concept of “normalization of deviance,” a behavior, she warned, that could crop up within any organization.

Normalization of deviance, Vaughan says, is an organizational phenomenon. For NASA, the concerns with the O-Rings began in the late 1970s. Problems were identified, then fixed. New problems arose and those were fixed as well. “That continued until accepting the failure was normal and routine for them,” Vaughan says.

In the moment, however, the organization didn’t feel as though the system was failing. Every prescribed procedure was followed and every box checked. NASA managers had deemed the O-Rings an “acceptable risk.”

“They developed a cultural belief: If they did everything possible, followed all the rules, it would be safe to fly,” Vaughan says.

Both internal and external reviews and years of documentation played into this culture of confidence. In her book, Vaughan wrote that “…the cause of the disaster was embedded in the banality of organizational life.”

NASA's paper trail showed that the night before the launch, the engineers' concerns about the cold weather's effect on the O-Rings were noted and considered. But because the O-Rings had long been treated as a known, manageable problem, NASA wasn't alarmed.

Years' worth of records showed that the O-Rings had repeatedly shown flaws that were subsequently fixed, including the previous year, when the O-Rings eroded during a cold-weather launch in January 1985. The O-Rings had held then, and NASA managers assumed they would hold again.

"My investigation found that they didn't actively argue for a launch they knew was risky, but they were as surprised as everyone else because they thought it was safe to fly," Vaughan says.

Fixing grave errors

In the aftermath of the Challenger explosion, Vaughan says, NASA made substantial changes to its procedures, decision-making processes, and technology. The organizational culture, however, remained the same.

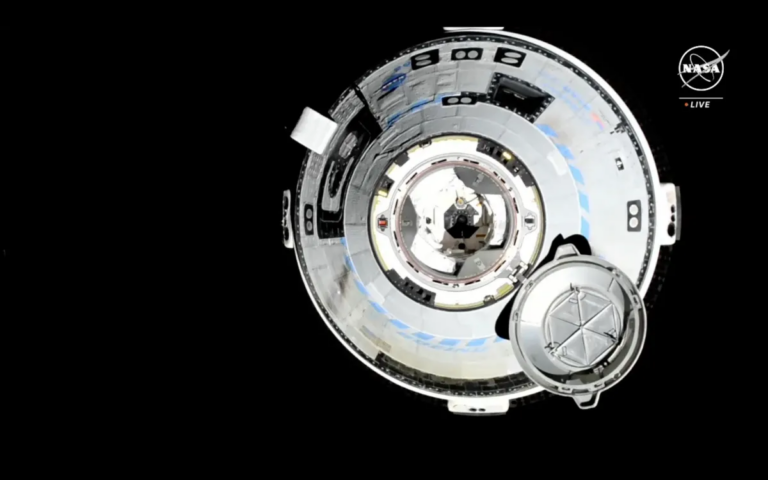

Then, on February 1, 2003, seven more astronauts died when the space shuttle Columbia disintegrated as it reentered the atmosphere. An investigation found that a suitcase-sized piece of spray foam had broken off the external fuel tank during launch and struck the left wing. As the vehicle descended toward Earth, superheated plasma penetrated the damaged wing and caused the shuttle to break apart.

The spray foam had caused problems on four previous launches. Each time, the situation was assessed and addressed. The risk, once again, had become normalized. "The problem with Columbia was because the problems with Challenger had never been fixed," Vaughan says.