The ancient Milky Way abounded with stars a few times more massive than the Sun, say astronomers in Italy and the United States. These stars left their mark by showering their stellar companions with carbon, barium, and other elements.

Theorists reason as follows. Stars form from clouds of cold gas. Carbon and oxygen cool the gas, allowing it to fragment into smaller clouds that create smaller stars. However, the ancient galaxy had little carbon or oxygen. Thus, the gas did not easily fragment, so the ancient Milky Way gave birth to a greater proportion of massive stars.

Unfortunately, observational evidence in support of this idea has been scant. That’s because the galactic halo — the Milky Way’s oldest component — is about 13 billion years old, and stars more massive than the Sun don’t live that long. So any such stars that arose in the halo are dead and gone.

Now, Sara Lucatello, Raffaele Gratton, and Eugenio Carretta of the Astronomical Observatory of Padova and Timothy Beers of Michigan State University have studied the stars that orbited some of those deceased halo stars. They report what they call “the first direct observational evidence that the IMF [initial mass function] of stars is not universal over time.” The astronomers will publish their work in an upcoming issue of The Astrophysical Journal.

Lucatello and her colleagues studied stars in the galactic halo with extremely low abundances of iron — less than 1/300 the Sun’s abundance. These stars formed long ago, before supernovae had contributed much iron to the Milky Way. As a result, the stars acquired little iron at birth.

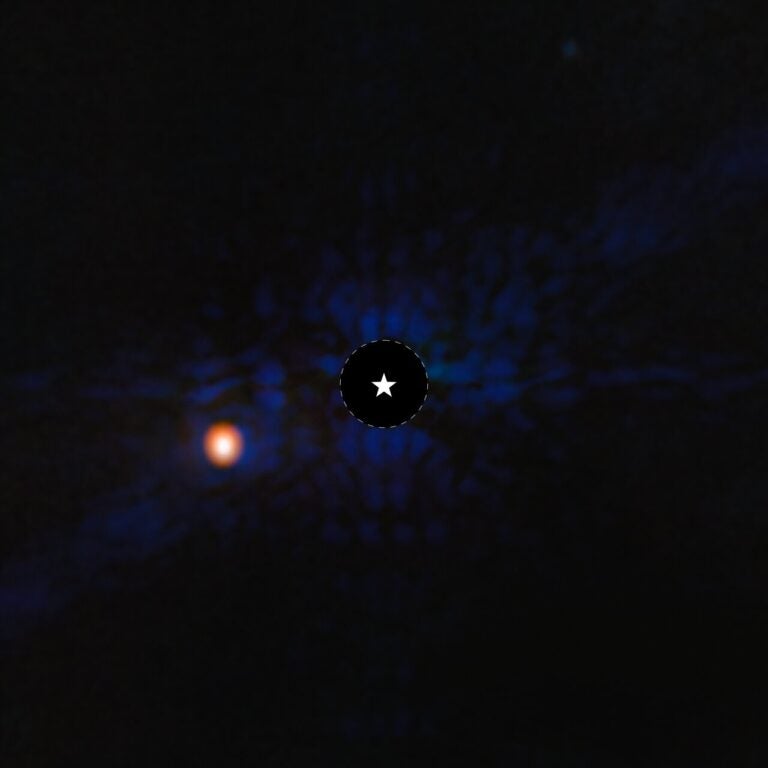

However, about 20 percent of these extremely iron-poor stars have high levels of carbon and barium relative to iron. As Lucatello and her colleagues report in another paper to appear in The Astrophysical Journal, these carbon- and barium-rich stars are double, orbited by white dwarf stars.

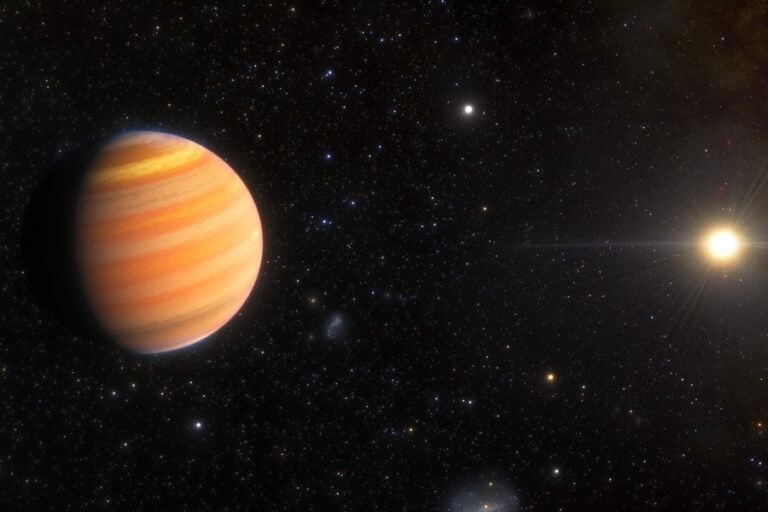

The white dwarfs were once more massive stars, probably born with 1.5 to 6 times the Sun’s mass. Modern examples of such stars include Vega and Regulus. As they age, these stars expand into red giants.

Red giants create most of the universe’s carbon. They also manufacture weightier elements, such as barium (atomic number 56), by releasing a slow flux of neutrons that transforms iron nuclei into heavier ones. In addition to barium, this so-called s-process (“s” for “slow”) forges many other elements, including strontium (atomic number 38), yttrium (atomic number 39), zirconium (atomic number 40), and lead (atomic number 82).

Red giants do not explode. Instead, they die by casting off their outer atmospheres, which enrich companion stars with carbon and s-process elements. These companions are the iron-poor but carbon- and barium-rich stars that Lucatello’s team studied in the galactic halo.

Stars with high abundances of carbon and s-process elements relative to iron are much more common in the galactic halo than in the galactic disk. Thus, the astronomers say, the ancient Milky Way formed a much greater proportion of its stars with masses between 1.5 and 6 times the Sun’s. About 5 percent of all modern stars are born in this mass range — but for the ancient Milky Way, the fraction was roughly 40 percent.
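
To see how strongly such a fraction depends on the shape of the initial mass function, here is a minimal sketch that counts stars born between 1.5 and 6 solar masses under a single power law. The slope of 2.35 (the classic Salpeter value), the mass limits of 0.1 and 100 solar masses, and the function name are illustrative assumptions, not the model Lucatello's team used. It will not reproduce the 5 and 40 percent figures quoted above, which come from the researchers' own analysis.

```python
def power_law_fraction(m_lo, m_hi, m_min=0.1, m_max=100.0, alpha=2.35):
    """Fraction of stars (by number) born with masses between m_lo and m_hi,
    assuming dN/dm is proportional to m**(-alpha) from m_min to m_max.

    All masses are in solar masses. The defaults are assumptions made for
    illustration only; they are not taken from the article.
    """
    if alpha == 1.0:
        raise ValueError("alpha = 1 needs a logarithmic integral; not handled here")
    p = 1.0 - alpha

    def count(a, b):
        # Analytic integral of m**(-alpha) from a to b.
        return (b**p - a**p) / p

    return count(m_lo, m_hi) / count(m_min, m_max)


# Steep, Salpeter-like slope: low-mass stars dominate the count.
steep = power_law_fraction(1.5, 6.0, alpha=2.35)

# Hypothetical flatter slope: relatively more intermediate-mass stars.
flat = power_law_fraction(1.5, 6.0, alpha=1.35)

print(f"steep slope:   {steep:.1%} of stars fall in the 1.5-6 solar-mass range")
print(f"flatter slope: {flat:.1%} of stars fall in the 1.5-6 solar-mass range")
```

The point of the comparison is qualitative: flattening the mass function, or raising its low-mass cutoff, moves a much larger share of newborn stars into the intermediate-mass range.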

“It’s certainly a fundamental problem: What was the mass function of the very metal-poor stars — the earliest stars to form?” asks halo-star expert Bruce Carney of the University of North Carolina at Chapel Hill. “We don’t have any witnesses to that particular event — all we have are clues and circumstantial evidence — and frankly, I think this is one of the more novel approaches to the whole issue.”

The new work may explain a long-standing puzzle. Most of the Milky Way’s globular clusters belong to the halo, but while astronomers know of 1,000 halo stars with iron abundances less than 1/300 that of the Sun, there’s not a single globular cluster so iron-poor. In fact, M15 in Pegasus and M92 in Hercules, both with 1/200 of the Sun’s iron abundance, are two of the most extreme globulars.
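
Astronomers usually quote such iron abundances on a logarithmic scale written [Fe/H], where 0 is the Sun's value and each step of -1 means ten times less iron. The short sketch below converts the fractions in this article to that scale. It assumes that "1/300 of the Sun's iron abundance" refers to the iron-to-hydrogen ratio relative to the Sun's, and the function name is made up for this example.

```python
import math


def fraction_to_fe_h(fraction_of_solar):
    """Convert an iron abundance given as a fraction of the Sun's value
    into the logarithmic [Fe/H] scale (assumes the fraction is the
    iron-to-hydrogen ratio relative to the Sun's)."""
    return math.log10(fraction_of_solar)


iron_poor_halo_stars = fraction_to_fe_h(1 / 300)  # the extremely iron-poor halo stars
extreme_globulars = fraction_to_fe_h(1 / 200)     # M15 and M92

print(f"1/300 solar -> [Fe/H] = {iron_poor_halo_stars:.2f}")
print(f"1/200 solar -> [Fe/H] = {extreme_globulars:.2f}")
```

On this scale, the gap between the most iron-poor globular clusters and the 1/300 threshold for the halo stars is a little under 0.2.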

Why don’t clusters with lower iron abundances exist? Lucatello and her colleagues say it’s because the clusters destroyed themselves. Extremely iron-poor clusters contained lots of stars with 1.5 to 6 solar masses. When these stars shed their outer atmospheres, most of the gas escaped the clusters, robbing the clusters of mass — and thus of the gravity that held them together. As a result, the clusters split apart, spilling their stars into the galaxy at large. That’s why, at very low iron abundances, the Milky Way has stars but no clusters.