When most people picture an astronomer, they think of a lone person sitting on top of a mountain, peering into a massive telescope. Of course, that image is out of date: Digital cameras have long since done away with the need to actually look through a telescope.

But now the face of astronomy is changing again. With the advent of more powerful computers and sky surveys that generate unimaginable quantities of data, artificial intelligence is the go-to tool for the keen researcher of space. But where is all of this data coming from? And how can computers help us learn about the universe?

AI’s appetite for data

Chances are you’ve heard the terms “artificial intelligence” and “machine learning” thrown around recently, and while they are often used together, they actually refer to different things. Artificial intelligence (AI) is a term used to describe any kind of computational behavior that mimics the way humans think and perform tasks. Machine learning (ML) is a little more specific: It’s a family of technologies that learn to make predictions and decisions based on vast quantities of historical data. Crucially, ML creates models that exhibit behavior that is not pre-programmed, but learned from the data used to train them.

The facial recognition in your smartphone, the spam filter in your emails, and the ability of digital assistants like Siri or Alexa to understand speech are all examples of machine learning being used in the real world. Many of these technologies are now being used by astronomers to investigate the mysteries of space and time. Astronomy and machine learning are a match made in the heavens, because if there’s one thing astronomers have too much of — and ML models can’t get enough of — it’s data.

We’re all familiar with megabytes (MB), gigabytes (GB), and terabytes (TB), but data at that scale is old news in astronomy. These days, we’re interested in petabytes (PB). A petabyte is about one thousand TB, a million GB, or a billion MB. It would take around 10 PB of storage to hold every single feature-length movie ever made in 4K resolution — and it would take over a hundred years to watch them all.

The Vera C. Rubin Observatory, a new telescope under construction in Chile, will be tasked with mapping the entire visible sky in unprecedented detail every few nights. Over a 10-year survey, Rubin will produce about 60 PB of raw data, studying everything from asteroids in our solar system to galaxies in the distant universe. No human being could ever hope to analyze all that data, and that’s from just one of the next-generation observatories being built. The race is on among astronomers in every field to find new ways to leverage the power of AI.
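To put those figures in perspective, the arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope estimate only: it uses decimal units (1 PB = 1,000 TB) and assumes, purely for illustration, that the 60 PB is spread evenly over every night of a 10-year survey, which a real observing schedule would not match. The function names are made up for this example.

```python
# Decimal (SI) storage units, expressed in bytes.
MB = 10**6
GB = 10**9
TB = 10**12
PB = 10**15

# Figures quoted in the article.
SURVEY_TOTAL_PB = 60
SURVEY_YEARS = 10

# Assumption for illustration: data arrives evenly on every calendar night.
NIGHTS = SURVEY_YEARS * 365


def petabytes_to(unit, petabytes=1):
    """Convert a number of petabytes into the given unit (MB, GB, or TB)."""
    return petabytes * PB / unit


def average_tb_per_night(total_pb=SURVEY_TOTAL_PB, nights=NIGHTS):
    """Average raw data volume per night, in terabytes."""
    return petabytes_to(TB, total_pb) / nights


if __name__ == "__main__":
    print(f"1 PB = {petabytes_to(TB):,.0f} TB")
    print(f"1 PB = {petabytes_to(GB):,.0f} GB")
    print(f"1 PB = {petabytes_to(MB):,.0f} MB")
    print(f"Average: {average_tb_per_night():.1f} TB per night")
```

Under those assumptions, the survey averages roughly 16 TB of raw data per night.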

Planet hunters

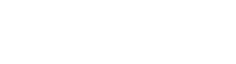

One area of astronomy where AI has made a significant impact is in the search for exoplanets. There are many ways to look for their signals, but the most productive methods with current technology usually involve studying the variation of a star’s brightness over time. If a star’s light curve shows a characteristic dimming, it could be a sign of a planet transiting in front of the host star. Conversely, a phenomenon called gravitational microlensing can cause a large spike in a star’s brightness, when the exoplanet’s gravity acts as a lens and magnifies a more distant star along the line of sight. Detecting these dips and spikes means sifting through millions of light curves, studiously collected by space telescopes like NASA’s Kepler and TESS (Transiting Exoplanet Survey Satellite).
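The idea of hunting for a dip in a light curve can be illustrated with a toy example. The sketch below is not one of the ML models used by exoplanet researchers. It is a simple threshold test on a simulated light curve, assuming a box-shaped transit and Gaussian noise, and every function name and parameter value is invented for illustration.

```python
import math
import random
import statistics


def make_light_curve(n_points=500, transit_start=200, transit_length=20,
                     depth=0.01, noise=0.001, seed=42):
    """Simulate normalized brightness with one box-shaped transit dip."""
    rng = random.Random(seed)
    flux = []
    for i in range(n_points):
        value = 1.0 + rng.gauss(0.0, noise)
        if transit_start <= i < transit_start + transit_length:
            value -= depth  # the planet blocks a small fraction of the starlight
        flux.append(value)
    return flux


def find_dips(flux, window=5, n_sigma=5.0):
    """Return sample indices where the locally averaged flux is well below baseline."""
    baseline = statistics.median(flux)
    # Median absolute deviation: a noise estimate that a short dip barely affects.
    mad = statistics.median([abs(f - baseline) for f in flux])
    sigma = 1.4826 * mad  # converts MAD to a standard deviation for Gaussian noise
    # Averaging `window` points shrinks the noise by sqrt(window).
    threshold = baseline - n_sigma * sigma / math.sqrt(window)
    half = window // 2
    dips = []
    for i in range(half, len(flux) - half):
        local_mean = statistics.fmean(flux[i - half:i + half + 1])
        if local_mean < threshold:
            dips.append(i)
    return dips


if __name__ == "__main__":
    dips = find_dips(make_light_curve())
    if dips:
        print(f"Possible transit between samples {dips[0]} and {dips[-1]}")
    else:
        print("No dips found")
```

A rule like this works on a clean, simulated curve. Real light curves contain stellar variability, instrument artifacts, and gaps, which is part of why researchers turn to models trained on large libraries of observed data.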

Using the huge libraries of observed light curves, astronomers have been able to develop ML-based models that can outperform humans in the search for exoplanets. But AI can do much more than just find exoplanets: It can also lead astronomers to new insights into how those techniques work.

In a paper published May 23 in Nature Astronomy, a team of researchers reported that ML algorithms had helped them discover a more elegant understanding of exoplanet microlensing, unifying multiple interpretations of how the exoplanet’s configuration with its host star might vary. The report came just months after researchers at DeepMind reported in Nature new AI-aided fundamental insights into mathematics.

Astronomers also hope that in the near future, machine learning will help them identify which planets might be habitable. With next-generation observatories like the Nancy Grace Roman Space Telescope and the James Webb Space Telescope (JWST), astronomers intend to use ML to detect water, ice, and snow on rocky planets.

Galactic forgeries

While many ML models are trained to distinguish between different types of data, others are intended to produce new data. These generative models are a subset of AI techniques that create artificial data products, such as images, based on some underlying understanding of the data used to train them.

The series of DALL-E models developed by the research company OpenAI — and the free-to-use imitator it inspired, DALL-E mini — have pushed this concept into the public eye. These models generate an image from any written prompt and have set the internet alight with their uncanny ability to construct images of, for instance, Garfield inserted into episodes of Seinfeld.

You might think that astronomers would be wary of any kind of fake imagery, but in recent years, researchers have turned to generative models in order to create galactic forgeries. A paper published Jan. 28 in Monthly Notices of the Royal Astronomical Society describes using the method to produce incredibly detailed images of fake galaxies, which can be used to test predictions from enormous simulations of the universe. They can also help develop and refine the data processing pipelines for next-generation surveys.

Some of these algorithms are so good that even professional astronomers can struggle to distinguish between the real and the fake. Take a recent entry on NASA’s Astronomy Picture of the Day webpage, which features dozens of synthetically generated images of objects in the night sky alongside just one real image.

Searching for serendipity

AI is also primed to make discoveries that we cannot predict. There’s a long history of discoveries in astronomy that happened because someone was in the right place, at the right time. Uranus was discovered by chance when William Herschel was scanning the night sky for faint stars; Vesto Slipher measured the speed of spiral arms in what he thought were protoplanetary disks, which eventually led to the discovery of the expanding universe; and Jocelyn Bell Burnell’s famous detection of pulsars happened while she was analyzing measurements of quasars.

Perhaps soon, an AI could join these ranks of serendipitous discoverers through a field of techniques called anomaly detection. These algorithms are specifically trained to sift through mountains of images, light curves, and spectra, looking for the samples that don’t look like anything we’ve seen before. In the next generation of astronomy, with its petabytes of raw data from observatories like Rubin and JWST, we can’t possibly imagine what these algorithms might find.
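The core idea of anomaly detection can be shown with a deliberately simple sketch. It assumes each object has already been boiled down to a short list of numbers (hypothetical features such as a brightness and a color), and it flags any object whose features sit far from the bulk of the catalog. Methods used on real survey data are considerably more sophisticated. The names, the simulated catalog, and the threshold here are all illustrative assumptions.

```python
import random
import statistics


def robust_scores(samples):
    """Score each sample by its largest per-feature distance from the median,
    in units of robust scatter (scaled median absolute deviation)."""
    n_features = len(samples[0])
    medians, scales = [], []
    for j in range(n_features):
        column = [s[j] for s in samples]
        med = statistics.median(column)
        mad = statistics.median([abs(x - med) for x in column])
        scale = 1.4826 * mad
        medians.append(med)
        scales.append(scale if scale > 0 else 1.0)  # avoid dividing by zero
    return [
        max(abs(s[j] - medians[j]) / scales[j] for j in range(n_features))
        for s in samples
    ]


def find_anomalies(samples, threshold=6.0):
    """Return the indices of samples whose score exceeds the threshold."""
    scores = robust_scores(samples)
    return [i for i, score in enumerate(scores) if score > threshold]


def make_demo_catalog(n_ordinary=1000, seed=7):
    """Simulated catalog: many ordinary objects plus one oddball at the end."""
    rng = random.Random(seed)
    catalog = [(rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0))
               for _ in range(n_ordinary)]
    catalog.append((12.0, -9.0))  # injected object unlike anything else
    return catalog


if __name__ == "__main__":
    catalog = make_demo_catalog()
    print("Anomalous objects at indices:", find_anomalies(catalog))
```

The median-based scoring is a design choice: unlike a mean and standard deviation, it is not dragged toward the very outliers the search is trying to find.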